Best Practices For Airflow In Production

Optimize DAGs, manage resources, monitor effectively, and ensure stability for Airflow's reliable operation in production.

Introduction to Airflow Monitoring

As the demand for data pipelines grows, effective Airflow monitoring becomes crucial. Monitoring helps keep your workflows running smoothly by surfacing failures early. It involves tracking metrics that indicate the health of your workflows, such as task success rates, execution times, and resource utilization. Regular monitoring lets teams identify issues before they escalate and keeps the data pipeline environment operating smoothly.
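As a rough illustration of the metrics mentioned above, the sketch below computes a per-task success rate and mean execution time from a list of run records. The `TaskRun` record and the `summarize` helper are hypothetical names invented for this example; in a real deployment the records would come from wherever you collect run history, not from a hard-coded list.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRun:
    """One finished task run (hypothetical record shape for this sketch)."""
    task_id: str
    state: str          # "success" or "failed"
    duration_s: float   # wall-clock execution time in seconds


def summarize(runs):
    """Return {task_id: {"success_rate": ..., "mean_duration_s": ...}}."""
    by_task = {}
    for run in runs:
        by_task.setdefault(run.task_id, []).append(run)

    summary = {}
    for task_id, task_runs in by_task.items():
        successes = sum(1 for r in task_runs if r.state == "success")
        summary[task_id] = {
            "success_rate": successes / len(task_runs),
            "mean_duration_s": mean(r.duration_s for r in task_runs),
        }
    return summary


# Sample data, made up for illustration.
runs = [
    TaskRun("extract", "success", 12.0),
    TaskRun("extract", "failed", 30.0),
    TaskRun("load", "success", 5.0),
]
summary = summarize(runs)
```

A falling success rate or a steadily rising mean duration for a single task is the kind of signal worth alerting on before it turns into an outage.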

Airflow Performance Optimization

Performance optimization is an ongoing challenge in production environments. By applying Airflow performance optimization strategies, you can significantly increase the throughput of your data pipelines. These strategies include minimizing task execution time, optimizing SQL queries, and running independent tasks in parallel. Continuous assessment and improvement help you keep pace with rising data loads.

Airflow Troubleshooting Techniques

When things go wrong, effective Airflow troubleshooting techniques are essential. Knowing how to debug failed tasks and read Airflow's logs is often the key to a quick resolution. Familiarize yourself with common errors and their fixes. Logging and alerting systems that notify you of issues speed up diagnosis and significantly reduce downtime.
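To make the alerting idea concrete, here is a minimal sketch of a failure notifier. The function names and the message format are assumptions made for this example. In Airflow, a function like this would typically be called from a task's `on_failure_callback`, which receives a context dictionary; extracting the fields from that context is left out here so the sketch runs on its own.

```python
import logging

logger = logging.getLogger("pipeline.alerts")


def format_failure_alert(dag_id, task_id, try_number, error):
    """Build a one-line alert that says what failed, on which attempt, and why."""
    return (
        f"[FAILED] {dag_id}.{task_id} "
        f"(attempt {try_number}): {type(error).__name__}: {error}"
    )


def alert_on_failure(dag_id, task_id, try_number, error, send=logger.error):
    """Format the alert and hand it to `send` (a logger by default).

    `send` is a stand-in for a real channel such as email or chat.
    """
    message = format_failure_alert(dag_id, task_id, try_number, error)
    send(message)
    return message


# Collect alerts in a list instead of sending them anywhere.
sent = []
alert_on_failure(
    "daily_sales", "load_orders", 2, ValueError("bad row"), send=sent.append
)
```

Putting the DAG, the task, and the attempt number in the first line of the alert saves a trip to the logs when several pipelines fail at once.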

Ensuring Airflow Security

Airflow security is often overlooked, but it is vital for protecting sensitive data. Implement authentication and authorization mechanisms to control access to specific workflows and DAGs. Regularly updating your Airflow version and dependencies reduces your exposure to known vulnerabilities. Security best practices must be applied across your entire data pipeline to safeguard the integrity of your information.

Effective Resource Management with Airflow

Airflow resource management is about making good use of computational resources. Properly configured resource limits and pools help you manage concurrency. With the right allocation, tasks get the resources they need to run efficiently without starving other processes or overloading your system.
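The idea behind a pool can be modeled in a few lines: a fixed number of slots, where a task must hold a slot for as long as it runs. The `SlotPool` class below is a conceptual sketch built on a semaphore, not Airflow's implementation; in a DAG you would instead assign tasks to a named pool through the operator's `pool` argument.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class SlotPool:
    """A fixed number of slots; a task holds one slot while it runs."""

    def __init__(self, slots):
        self._semaphore = threading.BoundedSemaphore(slots)
        self._lock = threading.Lock()
        self.running = 0
        self.peak = 0  # highest number of tasks seen running at once

    def run(self, fn, *args):
        with self._semaphore:  # blocks until a slot is free
            with self._lock:
                self.running += 1
                self.peak = max(self.peak, self.running)
            try:
                return fn(*args)
            finally:
                with self._lock:
                    self.running -= 1


def query_database(n):
    """Stand-in for a task that puts load on a shared database."""
    time.sleep(0.01)
    return n * 2


# Eight worker threads are available, but only two may hit the database at once.
db_pool = SlotPool(slots=2)
with ThreadPoolExecutor(max_workers=8) as executor:
    results = list(
        executor.map(lambda n: db_pool.run(query_database, n), range(6))
    )
```

The worker count and the slot count are deliberately different: workers decide how much can run overall, while the pool protects one specific shared resource.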

Mastering Airflow Scheduling

Scheduling is a core component of Airflow, responsible for executing workflows at the right time. A solid understanding of Airflow scheduling lets you build complex DAGs that respect interdependencies and timing requirements. Experimenting with different scheduling intervals helps you balance data freshness against resource usage.

Building for Scalability

Airflow scalability matters as data requirements grow. Design your DAGs with modularity in mind so they are easier to adjust as your workflows expand. Features like dynamic task generation and external task sensors help your workflows scale with increasing workloads.
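Dynamic task generation comes down to building tasks from a list in a loop instead of writing each one by hand. The sketch below shows the pattern with plain dictionaries; the source names and the `build_tasks` helper are made up for illustration. In an actual DAG file the same loop would create operators and wire their dependencies rather than fill a dict.

```python
# Hypothetical list of data sources; adding a source adds two tasks.
SOURCES = ["orders", "customers", "payments"]


def build_tasks(sources):
    """Return {task_id: [upstream task_ids]} with one extract -> load chain
    per source, followed by a final task that waits for every load."""
    tasks = {}
    for source in sources:
        extract_id = f"extract_{source}"
        load_id = f"load_{source}"
        tasks[extract_id] = []
        tasks[load_id] = [extract_id]
    tasks["publish_report"] = [f"load_{source}" for source in sources]
    return tasks


tasks = build_tasks(SOURCES)
```

Onboarding a new source then means appending one name to `SOURCES`, which keeps the DAG definition short as the number of sources grows.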

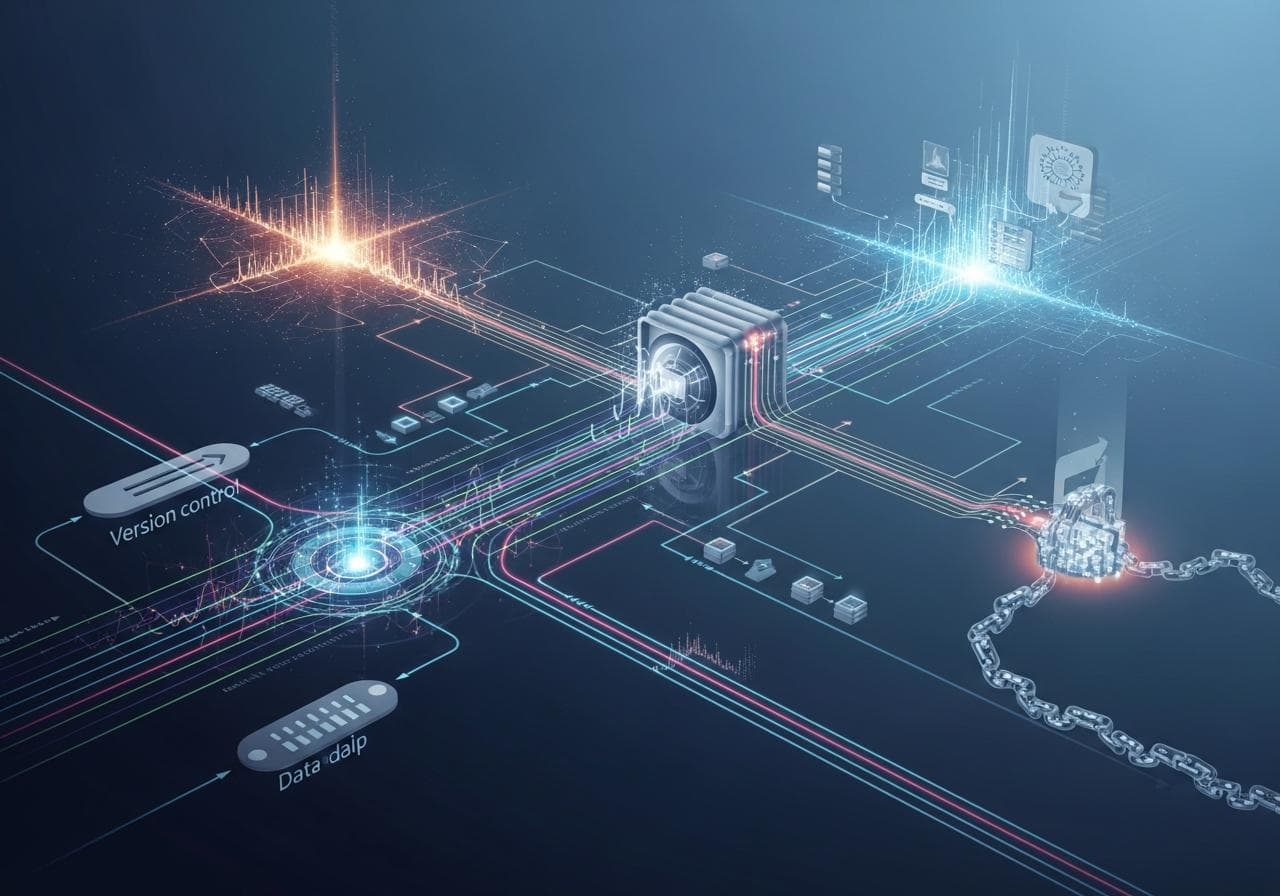

Implementing Version Control

Keeping your Airflow DAGs and configuration under version control is key to maintaining their integrity. A version control system like Git lets you track changes, collaborate with team members, and revert to previous states when necessary. Version control isn't just about code; it also applies to configuration, so that your environment stays reproducible and manageable.

Adopting CI/CD Practices

Incorporating CI/CD practices into your Airflow workflow can streamline your development process. By automating the testing and deployment of DAGs, teams can focus on building features rather than worrying about deployment hurdles. Each code commit can trigger automated tests that check new changes against existing functionality, which makes releases smoother and more predictable.

Designing Effective Data Pipelines

Crafting robust Airflow data pipelines is central to building effective workflows. Your DAG design should reflect not just the sequence of tasks but also fault tolerance and retry logic. Modular, reusable components make it easier to adapt and extend pipelines as your projects evolve.
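Retry logic is easiest to reason about when you see it written out. The helper below is a hand-rolled sketch of retries with exponential backoff; `run_with_retries` and `flaky_extract` are hypothetical names. Airflow tasks accept `retries`, `retry_delay`, and `retry_exponential_backoff` arguments for this purpose, so in a DAG you would normally configure those rather than write your own loop.

```python
import time


def run_with_retries(fn, retries=3, base_delay_s=1.0, sleep=time.sleep):
    """Call fn(); after each failure wait base_delay_s * 2**attempt and retry.

    The last exception is re-raised once all retries are used up.
    """
    for attempt in range(retries + 1):
        try:
            return fn()
        except Exception:
            if attempt == retries:
                raise
            sleep(base_delay_s * 2 ** attempt)


# A task that fails twice and then succeeds, to exercise the helper.
calls = {"count": 0}


def flaky_extract():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source temporarily unavailable")
    return "ok"


# Record the delays instead of actually sleeping.
delays = []
result = run_with_retries(
    flaky_extract, retries=3, base_delay_s=1.0, sleep=delays.append
)
```

Retries only help with transient failures, so they work best on tasks that are safe to run more than once.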

Airflow DAG Design Strategies

Airflow DAG design plays a pivotal role in the performance and reliability of your pipelines. Structuring your DAGs for readability and efficiency makes debugging easier. Keeping tasks small and well defined, and naming them consistently, makes the graph easier to read and simplifies future modifications.
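A naming convention is only useful if it is enforced. One lightweight option is a check that flags task ids that do not follow the agreed pattern. The convention used below (a verb prefix followed by lowercase words joined by underscores) is an assumption chosen for the example, not an Airflow requirement.

```python
import re

# Assumed convention: <verb>_<lowercase_words>, e.g. "load_daily_sales".
TASK_ID_PATTERN = re.compile(
    r"^(extract|transform|load|check)_[a-z0-9]+(_[a-z0-9]+)*$"
)


def invalid_task_ids(task_ids):
    """Return the task ids that do not follow the naming convention."""
    return [t for t in task_ids if not TASK_ID_PATTERN.fullmatch(t)]


bad = invalid_task_ids(
    ["extract_orders", "load_daily_sales", "Task1", "transformOrders"]
)
```

A check like this can run alongside your other automated tests so that inconsistent names are caught at review time.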

The Importance of Airflow Testing

No deployment is complete without thorough Airflow testing. Verify that all tasks behave as intended under a range of scenarios, including edge cases, and run automated tests on your DAGs before every deployment. This proactive approach catches problems early and helps your workflows run smoothly in production.
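One of the simplest automated checks is structural: every dependency must point at a task that exists, and the graph must not contain a cycle. The sketch below runs that check on a plain dictionary using Python's standard `graphlib` module (Python 3.9+); `validate_dependencies` is a hypothetical helper. With Airflow installed, a common equivalent is to load your DAG files through `DagBag` in a test and assert that its `import_errors` are empty.

```python
from graphlib import CycleError, TopologicalSorter


def validate_dependencies(tasks):
    """Check a {task_id: [upstream task_ids]} mapping.

    Returns a list of human-readable problems; an empty list means the
    structure is a valid directed acyclic graph.
    """
    problems = []
    for task_id, upstream in tasks.items():
        for up in upstream:
            if up not in tasks:
                problems.append(f"{task_id}: unknown upstream '{up}'")
    try:
        # Consuming static_order() raises CycleError if the graph has a loop.
        tuple(TopologicalSorter(tasks).static_order())
    except CycleError as exc:
        # The second element of args holds the tasks that form the cycle.
        problems.append(f"cycle detected: {exc.args[1]}")
    return problems


good = {"extract": [], "transform": ["extract"], "load": ["transform"]}
cyclic = {"a": ["b"], "b": ["a"]}

good_problems = validate_dependencies(good)
cyclic_problems = validate_dependencies(cyclic)
```

Structural checks like this are cheap to run on every commit; tests of what each task actually does still need their own cases, including the edge cases mentioned above.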

Conclusion

To sum up, adopting these best practices not only improves the performance of your Airflow environment but also keeps your data workflows sustainable and maintainable. For further reading on airflow management, check out the article on airflow management education or navigate through airflow management systems. You can also find academic insights on airflow management in larger systems. Happy piping!

Posts Relacionados

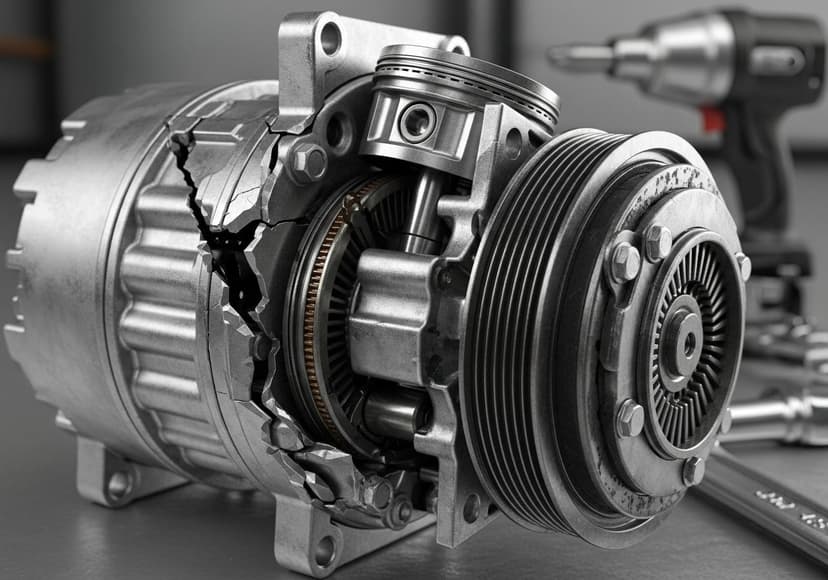

Ac Compressor Common Problems

Common issues involve refrigerant leaks, clutch failure, and internal damage impacting cooling efficiency and leading to repairs.

Ac Drain Cleaning Diy Guide

Regularly clean your AC drain to prevent clogs, maintain efficiency, and avoid potential water damage.

Ac Efficiency Maximizing Performance

Improve air conditioning efficiency by optimizing components. Achieving peak performance ensures reduced energy consumption.